Building a Production-Ready API Testing Framework

Learn how I built an API testing framework that reduced flaky tests from 10% to <1% using intelligent retry logic, Pydantic validation, and session pooling.

After years of battling flaky API tests in CI/CD pipelines, I finally cracked the code. Here's how I built a framework that reduced our flaky test rate from 10% to less than 1%.

The Problem

When I joined the team, our API test suite was a nightmare:

- 10% flaky test rate - Tests randomly failed in CI

- Network issues caused false positives

- Rate limiting (429 errors) killed entire test runs

- No schema validation - API changes broke silently

- 45-minute execution time - Blocked deployments

- Secrets leaked in CI logs (security nightmare)

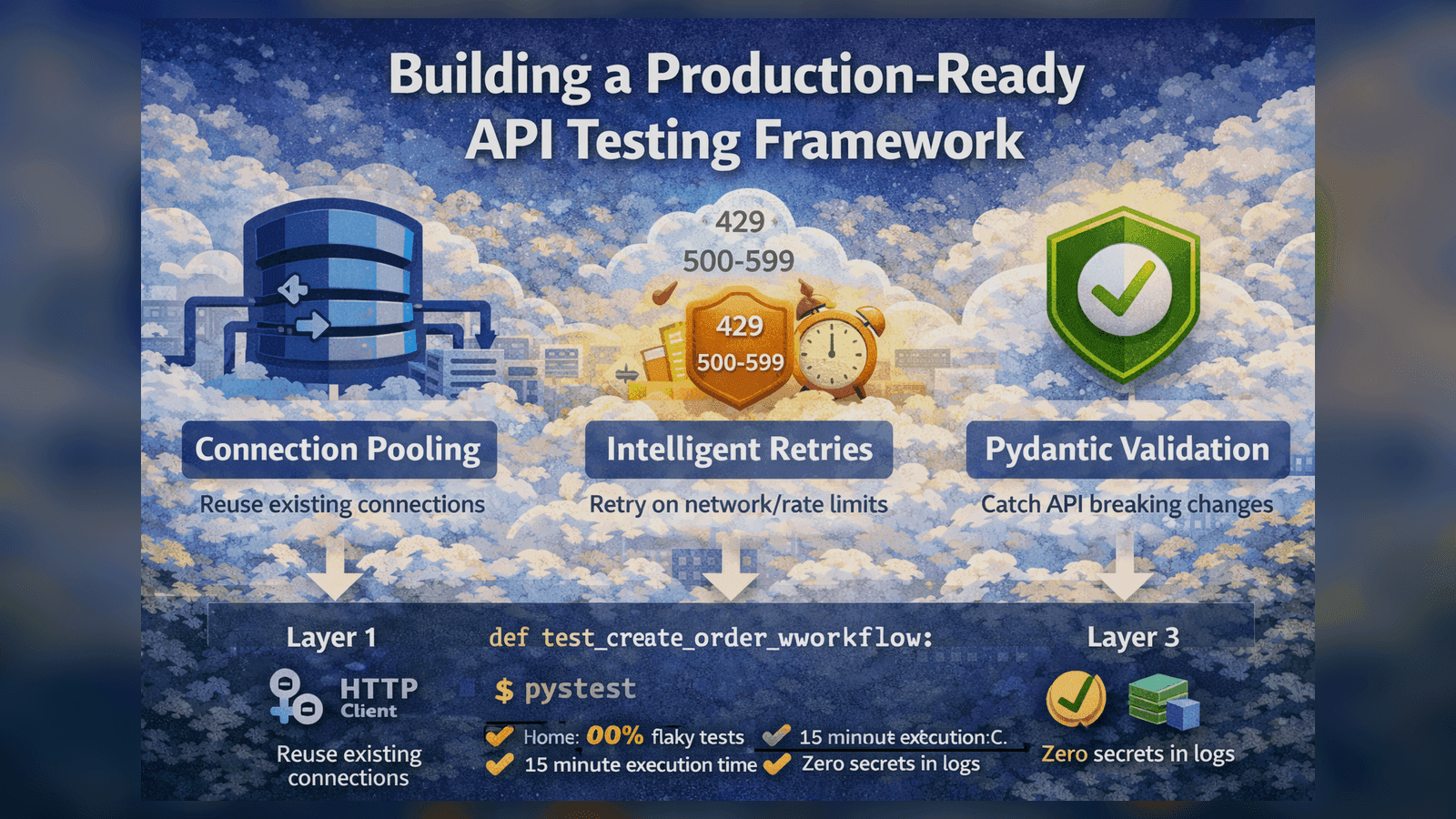

The Solution: Layered Architecture

I designed a three-layer architecture that separated concerns and made tests maintainable:

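    # Layer 1: HTTP Client with Connection Pooling
    import requests
    from requests.adapters import HTTPAdapter

    class APIClient:
        def __init__(self, base_url: str):
            self.base_url = base_url.rstrip("/")
            self.session = requests.Session()
            adapter = HTTPAdapter(
                pool_connections=10,  # number of per-host connection pools to cache
                pool_maxsize=100,     # max connections kept in each pool
                max_retries=0,        # We handle retries ourselves
            )
            self.session.mount('http://', adapter)
            self.session.mount('https://', adapter)

        def close(self):
            self.session.close()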

Why connection pooling? It made our test runs about 3x faster. Instead of opening a new TCP connection (plus a TLS handshake over HTTPS) for every request, the session reuses connections it already has open.
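The tests later in this post call `api_client.get(...)` and `api_client.post(...)` with relative paths, but the client class does not show those helpers. Here is a minimal sketch of how they might look, assuming a thin wrapper that joins the path onto `base_url` and sends everything through the shared session. The `PooledClient` name, the `build_url` and `request` methods, and the 10-second default timeout are illustrative assumptions, not part of the original framework:

```python
import requests
from requests.adapters import HTTPAdapter


class PooledClient:
    """Hypothetical variant of APIClient with HTTP verb helpers."""

    def __init__(self, base_url: str, timeout: float = 10.0):
        self.base_url = base_url.rstrip("/")
        self.timeout = timeout  # assumed default; tune per API
        self.session = requests.Session()
        adapter = HTTPAdapter(pool_connections=10, pool_maxsize=100, max_retries=0)
        self.session.mount("http://", adapter)
        self.session.mount("https://", adapter)

    def build_url(self, path: str) -> str:
        # Join a relative path such as "/users/123" onto the base URL.
        return f"{self.base_url}/{path.lstrip('/')}"

    def request(self, method: str, path: str, **kwargs) -> requests.Response:
        # Every call goes through self.session, so open connections are reused.
        kwargs.setdefault("timeout", self.timeout)
        return self.session.request(method, self.build_url(path), **kwargs)

    def get(self, path: str, **kwargs) -> requests.Response:
        return self.request("GET", path, **kwargs)

    def post(self, path: str, **kwargs) -> requests.Response:
        return self.request("POST", path, **kwargs)

    def close(self) -> None:
        self.session.close()
```

Setting a default timeout matters for the retry layer: without one, `requests` can wait indefinitely and a `Timeout` is never raised.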

    # Layer 2: Intelligent Retry Logic
    import time

    import requests
    from tenacity import retry, stop_after_attempt, wait_exponential, retry_if_exception_type

    @retry(
        stop=stop_after_attempt(3),
        wait=wait_exponential(multiplier=1, min=2, max=10),
        # RetryError must be listed here, or the 429/5xx raises below are never retried
        retry=retry_if_exception_type(
            (requests.ConnectionError, requests.Timeout, requests.exceptions.RetryError)
        ),
        reraise=True,
    )
    def make_request(method: str, url: str, **kwargs):
        response = requests.request(method, url, **kwargs)

        # Smart retry on specific status codes
        if response.status_code == 429:  # Rate limit
            time.sleep(5)  # extra pause on top of the exponential wait
            raise requests.exceptions.RetryError("Rate limited")
        if 500 <= response.status_code < 600:  # Server errors
            raise requests.exceptions.RetryError("Server error")
        return response

Key insight: Not all failures should trigger retries. We only retry on:

- Network errors (connection refused, timeouts)

- Rate limits (429)

- Server errors (5xx)

We never retry on other client errors (4xx, apart from 429) because those indicate real problems with our tests.
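To make these rules concrete without the `tenacity` decorator, here is a dependency-free sketch of the same policy. The function names (`is_retryable_status`, `backoff_delay`, `call_with_retries`), the `send` callable, and the `(status_code, body)` tuple it returns are all hypothetical, chosen for illustration. The backoff formula is a generic doubling schedule clamped to the 2 to 10 second window, not a claim about how `tenacity` computes its waits:

```python
import time
from typing import Callable, Tuple


def is_retryable_status(status_code: int) -> bool:
    """Retry on rate limits (429) and server errors (5xx) only."""
    return status_code == 429 or 500 <= status_code < 600


def backoff_delay(attempt: int, minimum: float = 2.0, maximum: float = 10.0) -> float:
    """Doubling delay for a 1-based attempt number, clamped to [minimum, maximum]."""
    return max(minimum, min(float(2 ** attempt), maximum))


def call_with_retries(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call send() until it returns a non-retryable status or attempts run out.

    send is a hypothetical zero-argument callable that returns (status_code, body)
    and raises ConnectionError or TimeoutError on network failures.
    """
    for attempt in range(1, max_attempts + 1):
        last_attempt = attempt == max_attempts
        try:
            status, body = send()
        except (ConnectionError, TimeoutError):
            if last_attempt:
                raise  # out of attempts: surface the network error
        else:
            if not is_retryable_status(status) or last_attempt:
                return status, body  # success, a real 4xx, or the final 429/5xx
        sleep(backoff_delay(attempt))
    raise ValueError("max_attempts must be at least 1")
```

Passing `sleep` as a parameter is a design choice for testability: a test can hand in `list.append` and assert on the recorded delays instead of actually waiting.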

    # Layer 3: Pydantic Schema Validation
    from pydantic import BaseModel

    class UserResponse(BaseModel):
        id: int
        email: str
        name: str
        created_at: str

        class Config:
            extra = "forbid"  # Fail if API returns unexpected fields

    def test_get_user(api_client):
        response = api_client.get("/users/123")
        assert response.status_code == 200

        # This will fail if API structure changes
        user = UserResponse(**response.json())
        assert user.email == "test@example.com"

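Why Pydantic? Type-safe validation catches breaking API changes immediately. The extra="forbid" setting is crucial: by default Pydantic silently ignores unknown fields, so without it an API that starts returning extra data would still pass. With it, any unexpected field fails the test and alerts us to the change.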
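To see what this looks like when a payload drifts, here is a small sketch. `StrictUser` and `validation_problems` are hypothetical names introduced for the demonstration. The class-based `Config` matches the style used in this post; newer Pydantic releases prefer `model_config`, so treat the exact configuration syntax as version-dependent:

```python
from pydantic import BaseModel, ValidationError


class StrictUser(BaseModel):
    """Hypothetical model mirroring UserResponse, for demonstration only."""

    id: int
    email: str

    class Config:
        extra = "forbid"  # reject fields the model does not declare


def validation_problems(payload: dict) -> list:
    """Return the field names Pydantic complains about; empty if the payload is valid."""
    try:
        StrictUser(**payload)
    except ValidationError as exc:
        return [str(err["loc"][0]) for err in exc.errors()]
    return []
```

Calling `validation_problems` with an extra key reports that key, and calling it with a missing required key reports the missing one, so both additions and removals in the API response surface as test failures.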

Handling Secrets Safely

One of our biggest security issues was secrets leaking in CI logs. Here's how I fixed it:

    import os
    from dataclasses import dataclass

    import pytest

    @dataclass
    class TestConfig:
        __test__ = False  # keep pytest from trying to collect this Test* class

        api_key: str = os.getenv("API_KEY", "")
        base_url: str = os.getenv("BASE_URL", "http://localhost:8000")

        def __repr__(self):
            # Overrides the generated dataclass repr so the key never reaches a log
            return f"TestConfig(api_key='***REDACTED***', base_url='{self.base_url}')"

    # Custom Pytest plugin to sanitize logs (the hook belongs in conftest.py)
    @pytest.hookimpl(hookwrapper=True)
    def pytest_runtest_makereport(item, call):
        outcome = yield
        report = outcome.get_result()
        if report.longrepr:
            # Redact secrets from error messages (redact_secrets is not defined in this post)
            report.longrepr = redact_secrets(str(report.longrepr))
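The hook calls `redact_secrets`, which the post does not show. One way it might be written, as a sketch under stated assumptions: the secrets to mask are the values of a known list of environment variables (here only `API_KEY`), plus anything that looks like a bearer token. The `SECRET_ENV_VARS` tuple, the regular expression, and the mask text are illustrative choices, not the original implementation:

```python
import os
import re
from typing import Iterable

# Assumed names of environment variables that hold secrets.
SECRET_ENV_VARS = ("API_KEY",)
# Matches "Bearer <token>" so tokens are masked even when not in the environment.
BEARER_PATTERN = re.compile(r"(Bearer\s+)[A-Za-z0-9._~+/=-]+")
MASK = "***REDACTED***"


def redact_secrets(text: str, env_vars: Iterable[str] = SECRET_ENV_VARS) -> str:
    """Replace known secret values and bearer tokens in text with a mask."""
    for name in env_vars:
        value = os.environ.get(name, "")
        if value:  # skip unset or empty variables; replacing "" would corrupt the text
            text = text.replace(value, MASK)
    return BEARER_PATTERN.sub(lambda m: m.group(1) + MASK, text)
```

Masking by value rather than by variable name means the secret is caught wherever it appears, including inside URLs, headers, and tracebacks.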

Session Fixtures for Speed

Using pytest fixtures properly made tests 3x faster:

    import os

    import pytest

    @pytest.fixture(scope="session")
    def api_client():
        """Reuse same client for entire test session"""
        # Same variable and default as TestConfig, so the client never gets None
        client = APIClient(base_url=os.getenv("BASE_URL", "http://localhost:8000"))
        yield client
        client.close()

    @pytest.fixture(scope="function")
    def auth_headers(api_client):
        """Get fresh auth token for each test"""
        response = api_client.post(
            "/auth/token",
            data={"username": "test", "password": "test"},
        )
        token = response.json()["access_token"]
        return {"Authorization": f"Bearer {token}"}

Scope matters: Session-scoped fixtures (API client) are created once. Function-scoped fixtures (auth tokens) are fresh for each test.

Real-World Test Example

Here's what a complete test looks like with all layers:

    import pytest

    def test_create_order_workflow(api_client, auth_headers):
        # 1. Create a product
        product_data = {
            "name": "Test Product",
            "price": 29.99,
            "stock": 100,
        }
        response = api_client.post("/products", json=product_data, headers=auth_headers)
        assert response.status_code == 201
        product = ProductResponse(**response.json())

        # 2. Create an order
        order_data = {
            "product_id": product.id,
            "quantity": 2,
        }
        response = api_client.post("/orders", json=order_data, headers=auth_headers)
        assert response.status_code == 201
        order = OrderResponse(**response.json())

        # 3. Verify order total (approx avoids float rounding mismatches)
        assert order.total == pytest.approx(product.price * order_data["quantity"])

        # 4. Verify inventory reduced
        response = api_client.get(f"/products/{product.id}")
        assert response.status_code == 200
        updated_product = ProductResponse(**response.json())
        assert updated_product.stock == product_data["stock"] - order_data["quantity"]
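The test relies on `ProductResponse` and `OrderResponse`, which the post does not show. A minimal sketch of what they might look like, assuming only the fields the test sends or reads plus a server-assigned `id`; the real API's schema may well differ:

```python
from pydantic import BaseModel


class ProductResponse(BaseModel):
    """Assumed shape: the fields sent in product_data plus a server-assigned id."""

    id: int
    name: str
    price: float
    stock: int

    class Config:
        extra = "forbid"  # same strictness as UserResponse


class OrderResponse(BaseModel):
    """Assumed shape: the fields sent in order_data plus an id and a computed total."""

    id: int
    product_id: int
    quantity: int
    total: float

    class Config:
        extra = "forbid"
```

If the API returns prices as strings or needs exact money arithmetic, `Decimal` fields may be a better fit than `float`; that depends on the API and is left open here.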

Results

After implementing this framework:

- ✅ Flaky test rate: 10% → <1%

- ✅ 125+ API tests with comprehensive coverage

- ✅ 3x faster execution (45 min → 15 min)

- ✅ Zero secret leakage in 6+ months

- ✅ Prevented 12 breaking changes from reaching production

Key Takeaways

- Connection pooling is mandatory for API tests

- Smart retries - Don't retry everything

- Pydantic validation catches breaking changes early

- Pytest fixtures with proper scope = massive speed gains

- Security first - Redact secrets everywhere

Next Steps

Want to implement this in your org? Start with:

- Add connection pooling to your HTTP client

- Implement exponential backoff for transient failures only (network errors, 429, 5xx)

- Add schema validation with Pydantic

- Audit your logs for leaked secrets

The investment in a solid foundation pays off every single day.

Questions? Reach out on LinkedIn or check out the full framework on GitHub.
